Provocation 4

There is no such thing as AI-proof.

Be it assessments, careers, or anything else, the ubiquity of AI and its pace of growth mean AI-proof is a problematically alluring impossibility.

The arrival of GenAI left educators scrambling to deal with a new and rapidly changing situation, and left many wishing there were a way to hit a pause button. A way to carve out some part of education where AI won’t cause disruptions. A way to just keep things the same as before. None of that is possible, though. AI isn’t inevitable, but it is increasingly unavoidable. There is no such thing as AI-proof.

Actually, that isn’t entirely true. With enough effort, you might be able to create an artificial bubble in which AI cannot directly impact student work. In this magical land of blue books and handwritten assignments, you can live a happy life free from AI. Assuming, of course, that students are only allowed to think and work under your direct supervision, so we can be sure it is all their own intellectual output. Not to take this hyperbolic situation too far, but I think that might mean some Orwellian, 1984-style home surveillance cameras so that students don’t get any unapproved thinking in outside of school hours. The real issue is that not only is there no such thing as AI-proof, but efforts to achieve AI resistance will run into the same problems as attempts to control AI cheating.

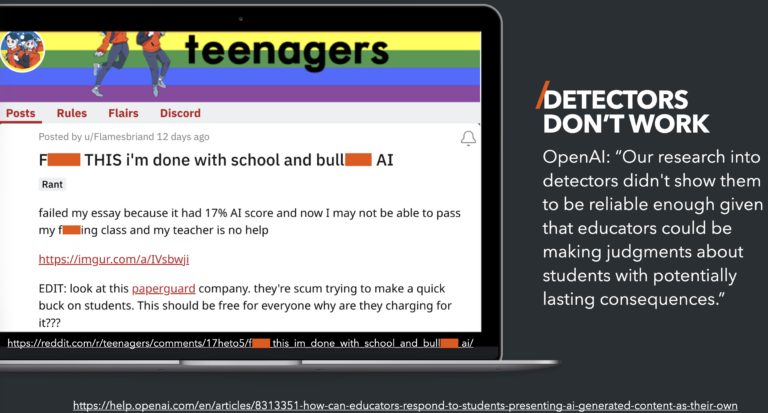

Trying to “proof” assessments was an issue before AI as well. Higher education tried really hard, and paid lots of money, to create a secure environment for online testing during and after the pandemic shutdowns, but in the end it just created an adversarial relationship with students. This has been a long-standing complaint about third-party test security companies, as the Electronic Frontier Foundation (EFF) detailed in 2021. The focus on security has become so single-minded that accommodations for lactating mothers taking medical board exams were available for fewer than half of the exams in 2021, and were still not fully supported in 2024. In a scathing rejection of the police state being imposed by proctoring companies, a 2022 Michigan State Law Review paper made the issue clear: “In addition to its impact on privacy, students’ well-being, and fairness, software specifically devoted to remote proctoring is simply not necessary to assess students’ knowledge or skills remotely.”

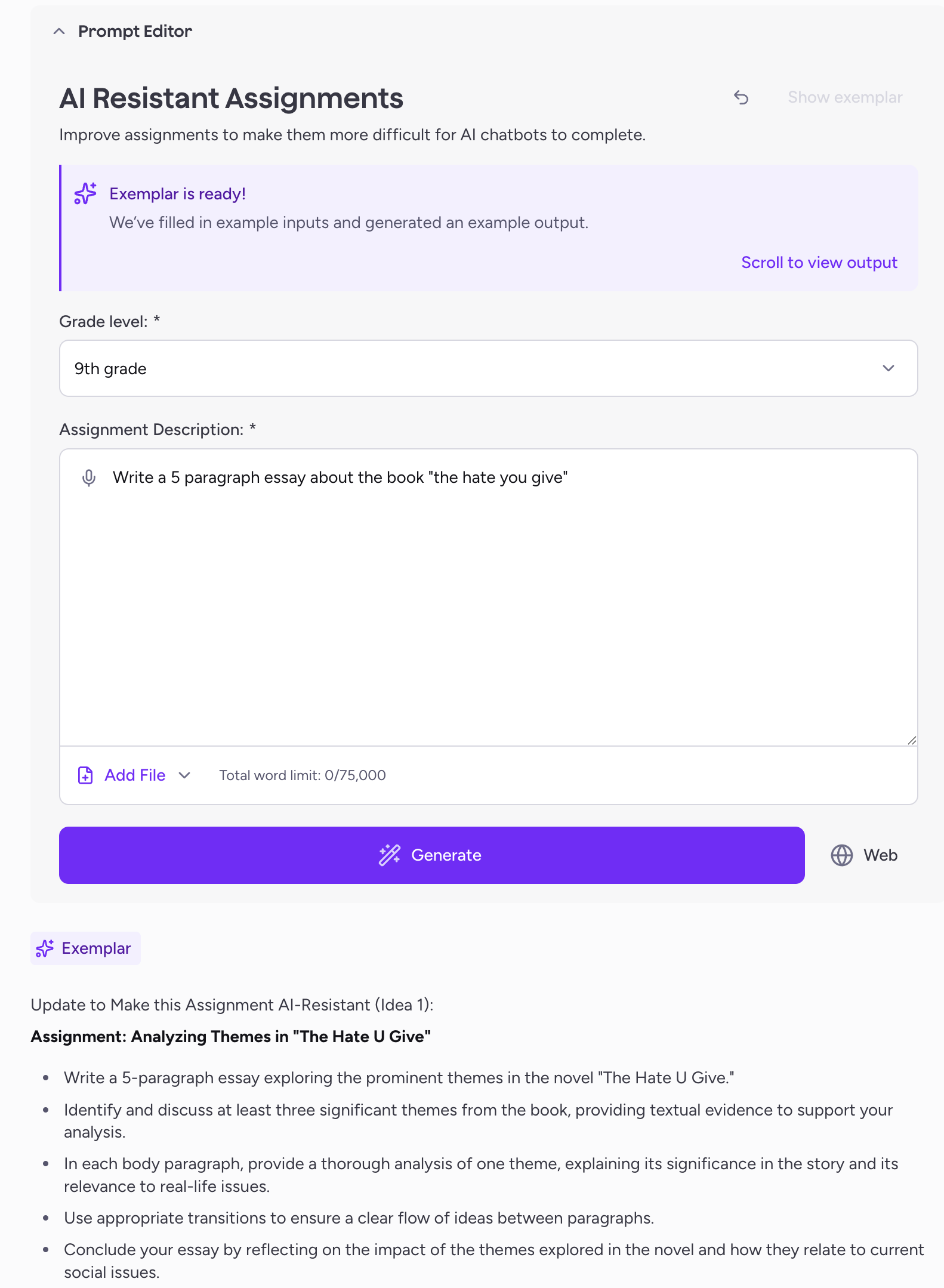

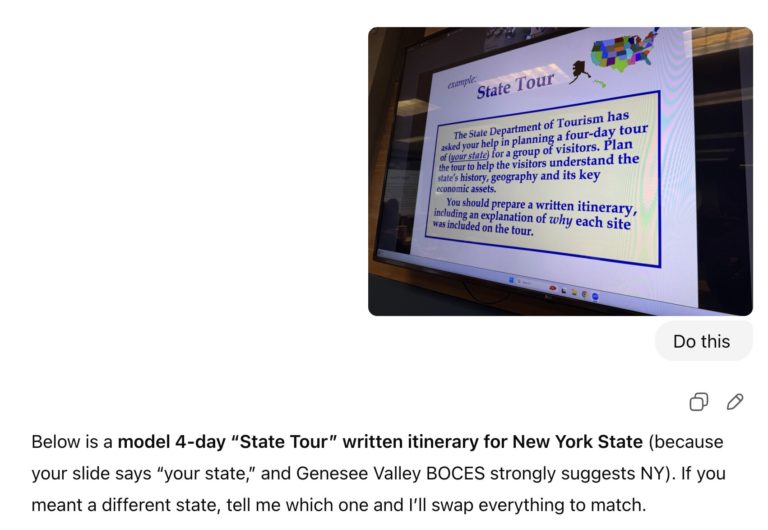

Yet the dream of developing AI-proof, or at least AI-resistant, assessments is incredibly alluring, as evidenced by many posts to social media by teachers and professors. Invariably, these requests for a “banging assignment that is AI-proof” devolve into other users responding with all the reasons why each suggested assignment won’t actually work. Even the AI products being sold to schools advertise tools that can make assignments AI-resistant. MagicSchool has one such tool that uses AI to try to rewrite assignments to be AI-proof. To be blunt, it doesn’t work. All it does is take a poorly conceived and explained assignment, like the MagicSchool exemplar of “Write a 5 paragraph essay about the book ‘the hate you give’[sic]”, and prompt-engineer it into an assignment that an AI tool will complete more effectively. A teacher who lacks the AI literacy to understand what these expanded prompts are actually doing for AI tools will find this quite problematically alluring.

To extend Provocation 2, validity doesn’t just matter more than cheating; it matters more than being AI-proof as well. In his article that was shared in the prior post, Phillip Dawson’s expansion of the concept of validity “moves us from a focus on just the instruments of assessment to also consider the implications of the assessment on what students do.” As he goes on to note: “A take-home authentic assignment might be vulnerable to all manner of unintended student behaviours, but it may have better face validity than a high-security multiple-choice exam.” In other words, giving up on the notion of being able to create AI-proof assignments may give us the freedom to actually conceive of better assignments that give us more insight into student learning.

The difficult truth is that the ridiculously high-security environments that have been created in the name of assessment validity don’t actually match anything in the real world of work that students are facing. Regardless of the field, I cannot imagine an employer finding value in a worker who locks themselves away in a room, refuses to ask for help with anything, doesn’t communicate with colleagues, and rejects available tools that could help them be more productive and effective. Yet that is what we do to students in the name of assessment security and our quest for AI-proof assignments.

Instead, our goal should be to identify ways to assign and assess work where the use of AI doesn’t matter because it doesn’t detract from the validity of the work product. That is the real solution for an AI-proof assessment: a situation where the validity and our gathering of evidence aren’t impacted by whether or not a learner uses AI. So why isn’t that bold statement followed by a helpful link to a set of “banging” assignments that qualify? Because truly valid assignments and assessments are, like all of this, a wicked problem that must be solved within a local context. “Item banks are typically disconnected from the enacted curriculum. As such, they provide decontextualized evidence that teachers often do not know how to interpret to inform whether they should adjust or monitor what they are currently teaching,” states the National Center for the Improvement of Educational Assessment.

So once again we come to the end of a post about The Rochester Provocations and reframing the conversation around AI in education without a simple solution. As frustrating as it likely is for you, the reader, the truth is that there isn’t a good solution to this wicked problem. In “Talk is Cheap” Corbin offers us a basic starting point by contrasting discursive approaches to AI, like statements, stop lights, and policies, with structural changes, like controlling the setting, mechanics, and other physical aspects. The suggestion is a two-lane approach that differentiates between secure and open assessments, allowing teachers to have both “assessment of learning” and “assessment as learning”. Rather than an item bank, The University of Sydney has created a more generic assessment type bank to help teachers develop different approaches, which I think offers a great starting point for K-12 as well.

Instead of chasing the alluring impossibility of AI-proof, let’s shift our efforts to improving assessments in general. I think the University of Sydney assessment types page sums it up nicely in its guidance to instructors: “Open Assessments are unsupervised assessments that provide students with authentic opportunities to receive feedback on their learning utilising any helpful resources and contemporary technologies. AI use may be purposefully incorporated, where helpful, to support students in developing disciplinary knowledge and skills alongside AI literacy. These focus on assessment for and as learning.” This isn’t at all about AI-proof; it is about good and valid assessment practices that end up being AI-agnostic.