Provocation 3

Our assessments were broken before AI.

We need to rethink how we understand and implement assessment, including an emphasis on developing and implementing valid assessments in pre-service teacher programs.

Patrick Whipple, the Director of Professional Learning Services at Genesee Valley BOCES, teaches a course at Niagara University on assessment for pre-service teachers. At the convening he talked about the challenges pre-service and new teachers face in assessing learning. The assessment issues are not new; they certainly cannot be blamed entirely on GenAI even if that technology has greatly exacerbated the problems.

Lew and Nelson (2016) examined this issue a decade ago in a paper investigating the intersectionality of culturally responsive teaching (connecting with students), classroom management, and assessment of learning. They noted a long history of research on a lack of assessment literacy amongst new teachers, including a Black and Wiliam (1998) study that addressed a “‘poverty of practice’ among teachers, in which only a few teachers have fully understood how to implement classroom formative assessment” (Lew & Nelson, 2016). GenAI has made all three of these practices more critical and simultaneously more challenging.

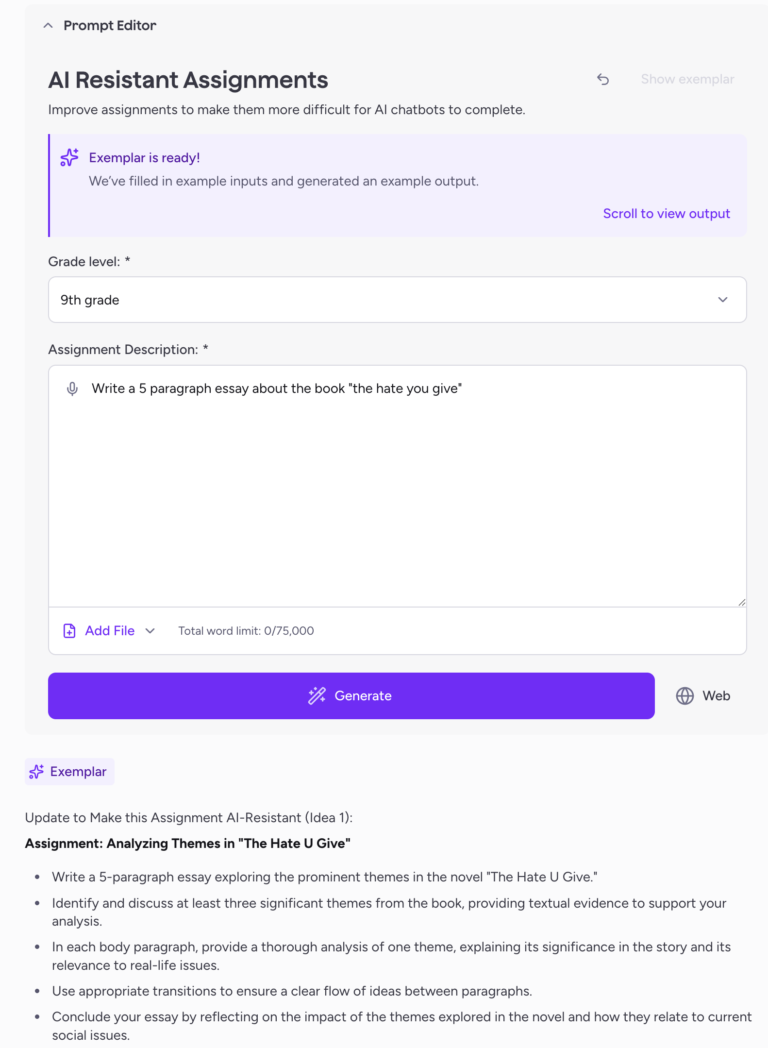

One change that is already taking place in New York is a shift towards performance-based learning and assessment. This is a cornerstone of the NY Inspires initiative, along with a shift to focusing on a new Portrait of a Graduate. One issue, however, is that what counts as a performance-based assessment is never clearly defined. Much of the understanding around performance (or project?)-based assessment was developed in the days before AI.

An example is McTighe’s (2020) Designing Authentic Performance Tasks and Projects. There are great resources and ideas in this book, but much of the thinking is grounded in the idea that completion of a task is evidence of student work on the task and therefore can be considered evidence of student learning on the content embedded in the task. Then AI arrived and rendered these assumptions obsolete. Instead we now need to reconsider performance-based tasks in terms of a focus on the process of learning rather than just the submission of a product, even if it seems like a really good product.

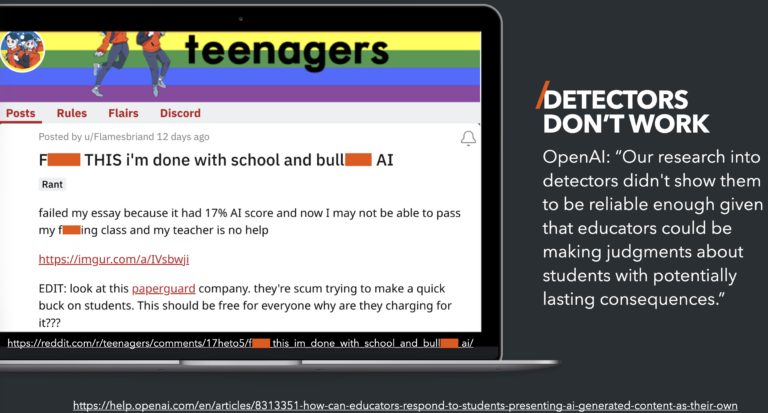

In a recent training led by Jay McTighe, I took sideways pictures of example tasks on his slides and submitted those photos to ChatGPT along with the highly engineered prompt of “do this.” ChatGPT understood that I wanted it to do my homework and helpfully completed the entire performance-based task in under a minute. The underlying problem is that this was always somewhat of an issue. Consider the classic elementary volcano learning task. Students’ understanding of volcanic processes is measured by their ability to construct a model that captures their learning. But does it? Or does this turn into another diorama project that tells us more about equity issues and the socio-economic status of families than anything curriculum related?

Process over product is a great place to start in terms of reimagining both the assessment tasks and scoring, but I think it probably goes deeper. There is existing research on the development of a growth or learning mindset and the impact of resubmissions of work (mastery learning) and feedback during the work process. By de-emphasizing the product and focusing on the process, we can find ways to make our assessments valid even in this age of AI.

In our expert panel work, Thomas Corbin stressed that this isn’t easy. He told the story of a colleague who came to him asking how to change assessments so that they still work with AI. Each possible solution introduced new issues, a clear indicator that this is a wicked problem. Corbin also talked about his paper “On the essay in a time of GenAI,” where he examines a shift from the essay as a product to the essay as a process of learning while writing over time. He laments the downfall of the once glorious essay to a formulaic five-paragraph summary of content. In other words, the essay as an assessment was broken before the arrival of AI.

Consider the volcano project in terms of Corbin’s discussion about the essay as a writing and learning process vs. writing a submission product. NASA’s Jet Propulsion Laboratory has a volcano project that is process-based. Instead of submitting a finished volcano, students use the process of mapping multiple eruptions of baking soda and vinegar over time to memorialize the “lava” flows in clay and construct an understanding of how a volcano grows over time. By journaling in writing/audio/video their growing understanding and highlighting changes in their ideas or expectations, students’ learning can be captured as an assessment artifact.

What is clear is that the presence of issues around assessing learning isn’t a new AI-driven phenomenon. Our assessment practices have been problematic for a rather long time. As was noted in a 2022 historical review of educational assessment: “This brief history bringing us into early 21st century shows that educational assessment is informed by multiple disciplines which often fail to talk with or even to each other. Statistical analysis of testing has become separated from psychology and education, psychology is separated from curriculum, teaching is separated from testing, and testing is separated from learning. Hence, we enter the present with many important facets that inform effective use of educational assessment siloed from one another” (Brown, 2022).

Before the arrival of AI, assessment was already a challenge. AI has exacerbated that challenge by removing the foundation upon which most assessments are built: that the work product as submitted by students is valid evidence of student learning and understanding. And yet, since none of this is new, there is existing research in which we can (re)discover potential solutions. Two examples are work on feedback literacy in students and work on shifting feedback from a summative to a formative point so that it can inform future work. Both of these ideas support a shift from product to process and from submission to learning.