Provocation 1

There is no AI problem.

Education itself is a wicked problem; assuming there is an AI-in-education problem risks us thinking there is an AI solution.

AI arrived in our world and our schools in late 2022, and we have been talking about it since then. Too often, though, the conversation has focused solely on the technology itself, and not the wider situation we are working within. As Neil Postman noted in a speech on technological change, such change is ecological, not simply additive. A major new technology changes the entire situation into which it is released. Our response must similarly consider the wider situation.

And so our provocations begin with the statement that “There is no AI problem.” That isn’t to say that we didn’t talk about very real concerns related to generative AI at our grant project convening in early December 2025, but we also went much further. Thanks to funding from Google, we were able to bring together a wide range of fields and perspectives so that we could build a better understanding of where education is going as we adapt to the emergence of generative AI.

What we quickly determined is that we are facing a wicked problem in education. Wicked problems are defined as being difficult or impossible to actually solve because the issues and the desired solution states are ill-defined, shifting, and tangled with other problems[2]. Wicked doesn’t refer to a problem being evil, but rather the level of challenge it poses. To reduce what we are facing right now to a simple “AI problem” risks us being sold a simple “AI solution” that doesn’t actually address any of the underlying issues.

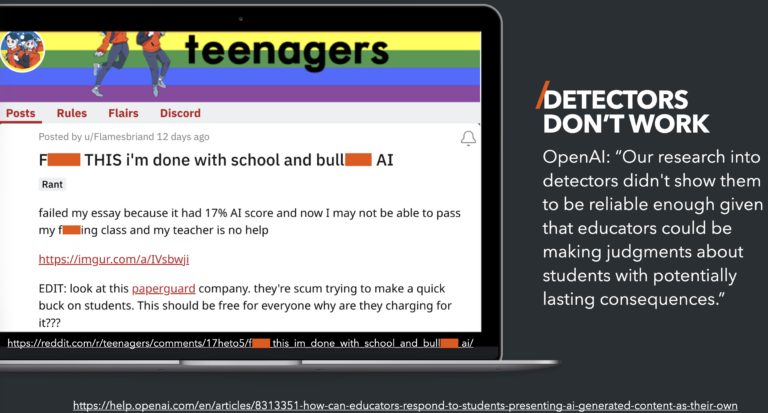

What I have heard from the wider educational field over the past couple of years are concerns about AI. Teachers are worried that students are “cheating” or offloading work onto AI tools. Principals are seeing disciplinary issues driven by AI nudity tools. Counselors are concerned about the addictive nature of chatbot companions. So doesn’t this list of issues mean we have an AI problem?

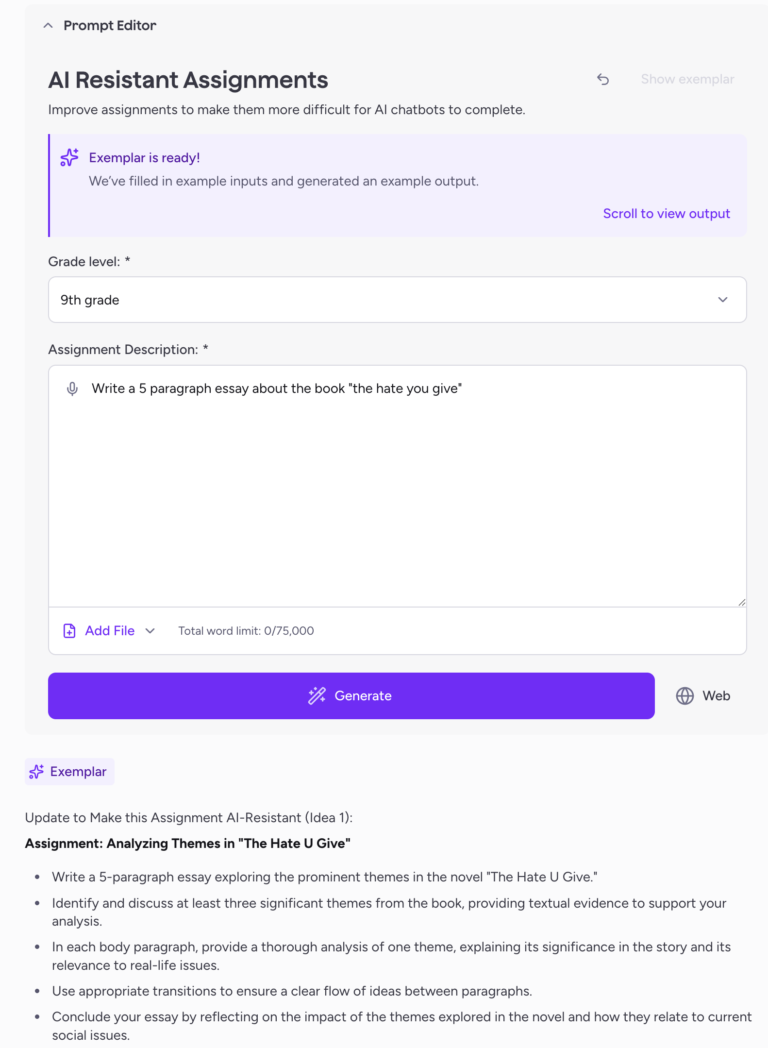

Not really. Though AI has exacerbated the issues, there were real problems with how we assign and assess student work before the arrival of GenAI. As we will explore more deeply in a later provocation, tools like PhotoMath and Google Translate have been an issue for more than a decade. Similarly, social media and sexting have been disciplinary issues for over a decade. Finally, as the recent focus on social media regulations and cell phone bans shows, there have been mental health worries for quite a while as well. So no, there isn’t an AI problem; there is a wicked problem in education that includes a broader concern about a variety of technologies but also many other issues.

That is where we wanted to focus as we started constructing the logical argument housed within the eight provocations. Unfortunately, we will not be able to offer any clear solutions. The wicked nature of the problems we face means any solutions must be investigated locally to see where they stand on a continuum of better to worse. It also must be accepted that some solutions will introduce new, unintended problems. And yet we must work on this problem. Each of us, in our local situation, has to explore what we are facing and find a way to start addressing things.

Brookings recently released a report on AI in education that was widely touted for concluding that the risks of AI in education currently outweigh potential benefits. While that was indeed the conclusion, the report doesn’t end there. It actually concludes with a call to action for all of us to work together to identify paths forward that balance the benefits and risks of AI usage without giving in to the harms.

We started with the statement that there is no AI problem not to dismiss or diminish the real risks that AI brings to education, but to avoid the even greater risk of thinking that there is a simple solution available. While I have seen some benefits in local schools from the New York State bell-to-bell ban on cell phones, I worry that this isn’t a real, long-term solution to the “cell phone problem.” Like AI, cell phones are part of a wider ecosystem of issues in education, society, and technology.

A pause on cell phone access to allow schools an opportunity to investigate and address the underlying issues can work, but only in the short term. Don’t forget that, as with AI, students are going home to a world of often unrestricted phone access. If we don’t make use of the limited hours of [supposedly] banned cell phone time from bell-to-bell to educate youth about, and inoculate them against, the dangers that led us to ban phones, then what hope is there for long-term change?

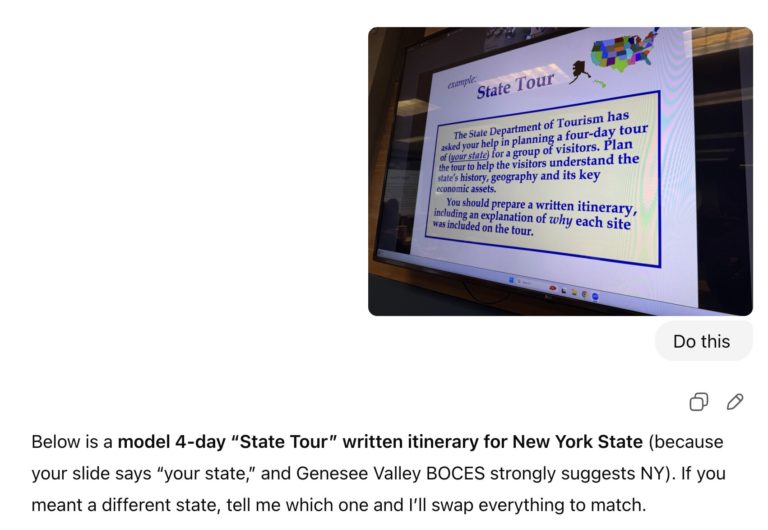

We are in an odd space right now when it comes to AI. As Justin Reich noted in an MIT report, and expounded upon in a follow-up paper, AI is an arrival technology. Schools didn’t adopt AI; it showed up and rapidly infiltrated our learning spaces, and now we have to deal with it. But I would extend this theory to say that the real issue with arrival technologies is that they exacerbate pre-existing issues. AI didn’t create the assessment challenges we face, but it certainly laid bare the issues in how we have been assigning and assessing student work in recent years. That is the foundation for this provocation and the basis for our wider approach.

As the first provocation, the statement that there is no AI problem sets up the argument to come. Over the next few statements we address practices within the field around assessment and learning. In the latter half of the provocations we shift to a policy perspective to tackle some bigger issues before concluding with a call back to this first statement. This isn’t strictly an AI problem, but avoiding both AI and the underlying issues is simply not an option.